Engineering Strategy

DORA Metrics Are Obsolete: Here's What Replaced Them

DORA metrics are obsolete in AI-augmented teams. Deployment frequency no longer signals health. Here's the diagnostic framework mid-market operators need instead.

The assumption that engineering capacity scales with headcount was always a simplification. By 2026, it's a liability. A 5-person team today can ship what a 50-person team shipped in 2016 — not because engineers are working harder, but because the architectural unit of value has changed. The bottleneck is no longer how many hands you have. It's how well your senior engineers can direct AI systems, validate their output, and make architecture decisions fast enough to matter.

That's the structural case for the AI-augmented engineering pod: a concentrated unit of three to five senior engineers, each orchestrating specialized AI agents, operating without the coordination overhead that bloats traditional delivery teams into slow, expensive approximations of output.

What follows isn't an argument for doing more with less. It's an argument for doing things differently — and understanding exactly why the old model breaks under AI-native conditions.

Traditional delivery teams spend 20–40% of their total capacity on coordination: standups, sprint planning, backlog grooming, handoff documentation, and ticket triage. That's not waste in the pejorative sense — it's the friction cost of getting information to flow across people who each hold a partial view of the system. Every engineer you add compounds that cost.

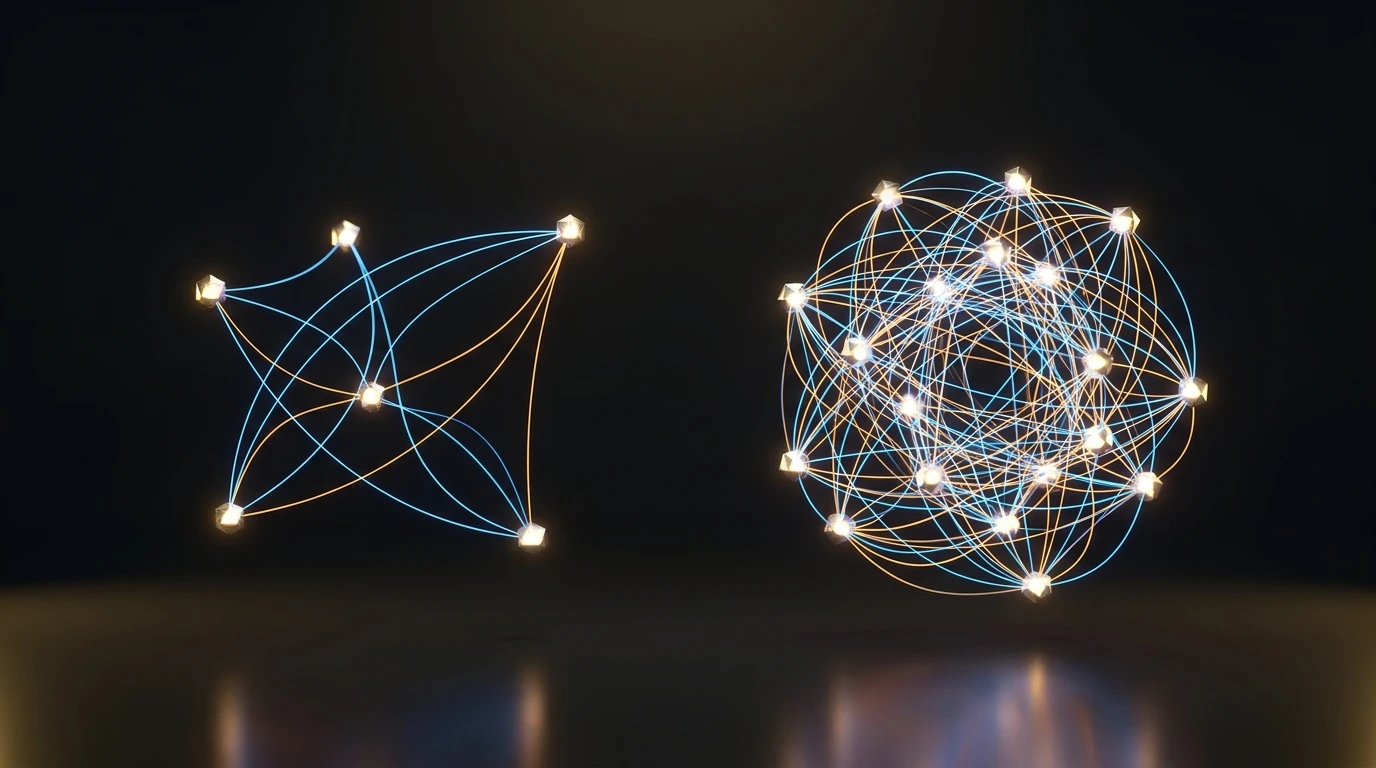

The math is unforgiving. A five-person team manages ten communication paths. Double it to ten engineers and you're managing forty-five. That's not a linear penalty. It's quadratic (n(n-1)/2 paths for n people), and it's structural. No process improvement fixes it permanently; you can only manage it until the next re-org.
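The path counts quoted here follow from the standard pairwise-connection formula, n(n-1)/2. As a minimal sketch (the function name `communication_paths` is an illustrative choice, not something taken from a cited source), the growth can be tabulated like this:

```python
def communication_paths(team_size: int) -> int:
    """Distinct person-to-person channels in a team of `team_size` people.

    Every pair of people is one potential channel, so the count is
    n * (n - 1) / 2.
    """
    if team_size < 0:
        raise ValueError("team_size must be non-negative")
    return team_size * (team_size - 1) // 2


if __name__ == "__main__":
    # Tabulate how the channel count grows with headcount.
    for n in (3, 5, 10, 20, 50):
        print(f"{n:>2} engineers -> {communication_paths(n):>4} paths")
```

Going from five to ten engineers doubles headcount but takes the path count from 10 to 45, which is the compounding cost the paragraph describes.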

In an AI-augmented environment, that coordination overhead doesn't just persist; it actively undermines the model's core advantage. When one engineer with an agent swarm can handle what previously required three specialist handoffs, adding more humans to the chain doesn't accelerate delivery. It re-introduces the latency the agents just eliminated. A large team with architecture review boards moves from idea to production in a quarter; a five-person AI-augmented pod can iterate eight times in the same window.

The delivery team model made sense when the constraint was individual human throughput. That constraint has moved. The new constraint is direction-setting, architectural judgment, and quality control — none of which scale with headcount.

Here's the counterintuitive finding that most AI evangelism skips over. Faros AI analyzed telemetry from over 10,000 developers across 1,255 teams and found something damaging: while AI coding assistants measurably increase individual developer output, the correlation between AI adoption and performance disappears at the company level.

Developers write more code, parallelize more workstreams, and close more tickets. But the code is getting bigger and buggier, shifting the bottleneck to review. Senior engineers who should be making architectural decisions spend their cycles reading AI-generated PRs for subtle logic errors and hidden dependencies. The throughput gain evaporates upstream.

10% vs. 30%

Teams applying AI only to code generation capture ~10% productivity gains; teams deploying AI across the full SDLC capture 25–30% — the gap is structural, not a tooling problem.

Gartner corroborates this from the top down: over half of developers report AI has improved team output by only 10% or less, because most organizations limit AI to code generation rather than applying it across the full software development lifecycle. Teams that deploy an ensemble of AI tools across requirements, testing, security scanning, and deployment, not just autocomplete in the editor, are projected to achieve 25–30% productivity gains by 2028. Code-generation-only approaches yield roughly 10%.

The implication is direct: the gap between a 10% gain and a 30% gain is not a tooling gap. It's a structural one. Organizations that bolt AI onto an existing delivery team structure get marginal output increases and new review bottlenecks. Organizations that redesign the team around AI-native workflows — where senior engineers set direction, AI agents execute, and review is systematic rather than heroic — capture the compounding gains.

The bottleneck in an AI-augmented environment shifts from production speed to direction-setting and quality control. Seniority is no longer a nice-to-have — it's the core structural constraint.

The conventional delivery team model is economically rational under conventional conditions: senior engineers are expensive, so you hire a few and back-fill capacity with mid and junior talent. The seniors architect, the juniors implement. The ratio is a cost lever.

AI breaks that ratio in a specific way. Junior engineers — by definition — lack the system knowledge to evaluate whether AI-generated code is architecturally sound, whether it introduces a subtle race condition, or whether it's the right abstraction for where the system needs to go in six months. They can tell you if the tests pass. They can't tell you if the design is wrong.

Gartner's survey of 300 US and UK organizations found that 56% of software engineering leaders rated AI/ML engineer as the most in-demand role for 2024 — and that generative AI will require 80% of the engineering workforce to upskill by 2027. The upskilling burden isn't symmetric. A senior engineer who understands distributed systems learns to orchestrate agents in weeks. A junior engineer who learns to prompt well has still never debugged a production incident at 2am with a cascading cache failure. Those aren't the same skill set, and AI doesn't close that gap — it widens it.

The AI-augmented pod model doesn't eliminate junior engineers from the industry. It concentrates the value-generating unit at the senior level and eliminates the structural dependency on junior throughput. Work that previously required a junior implementation layer now routes through AI agents — directed by engineers who can validate the output against architectural intent.

The term "neural pod" is architectural shorthand for a specific operating model, not a headcount preference. The structure is:

The critical design principle: AI agents handle execution; senior engineers hold judgment. The moment you invert that — when an engineer is executing and an AI is making architectural decisions without human validation — you've built cognitive debt into your foundation, and it compounds with every sprint.

There is a failure mode that pod advocates underweight. A Berkeley Haas study of 40 workers at a 200-person tech company found that AI caused employees to work faster, take on broader task scopes, and extend their workdays — often without being asked. The velocity increase was real. So was the burnout.

For a mid-market operator, this is a direct risk. The pod model depends on sustained senior judgment. An engineer running at 120% for three months makes architectural decisions under fatigue — and AI-native environments are particularly unforgiving of subtle architectural errors, because agents will faithfully implement the wrong design at ten times the speed.

Pod design, done correctly, includes deliberate pacing guardrails: explicit WIP limits per engineer, rotation of high-cognitive-load work, and a team size floor that prevents single points of failure. A three-person pod is not a four-person pod with one person removed. It's a different risk profile. Know which you're running.
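Two of these guardrails, per-engineer WIP limits and the single-point-of-failure check, are simple enough to sketch in code. The following is a minimal illustration only: the `Engineer` class, the default limit of two items, and the `single_points_of_failure` helper are all hypothetical names and values chosen for the example, not part of any cited framework.

```python
from dataclasses import dataclass, field


@dataclass
class Engineer:
    name: str
    wip_limit: int = 2  # assumed default; tune per team
    active_items: list[str] = field(default_factory=list)

    def can_take(self) -> bool:
        """True while the engineer is below their WIP limit."""
        return len(self.active_items) < self.wip_limit

    def assign(self, item: str) -> bool:
        """Assign work only if the WIP limit allows it; report success."""
        if not self.can_take():
            return False
        self.active_items.append(item)
        return True


def single_points_of_failure(coverage: dict[str, list[str]]) -> list[str]:
    """Return the domains covered by fewer than two distinct engineers."""
    return sorted(
        domain for domain, owners in coverage.items() if len(set(owners)) < 2
    )


if __name__ == "__main__":
    alice = Engineer("alice")
    for task in ("auth refactor", "agent eval harness", "billing migration"):
        accepted = alice.assign(task)
        print(f"{task}: {'assigned' if accepted else 'rejected (WIP limit)'}")

    coverage = {
        "architecture": ["alice", "bob"],
        "platform": ["bob", "chen"],
        "security": ["chen"],
    }
    print("Single points of failure:", single_points_of_failure(coverage))
```

In a three-person pod, a coverage map like the one above will usually flag at least one domain, which is one way to make the "different risk profile" concrete.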

80%

Gartner predicts 80% of the engineering workforce will need to upskill by 2027 as generative AI spawns new roles — making seniority the non-negotiable constraint in pod design.

By 2030, Gartner predicts 80% of large engineering teams will be reorganized into smaller, AI-augmented units. By 2027, 70% of all software engineering leader role descriptions will explicitly require oversight of generative AI — up from less than 40% today. The direction is settled. The question for a mid-market operator isn't whether this shift happens; it's whether you're on the right side of it when it does.

The practical decision framework breaks into four diagnostics:

The kind of team that closes this gap for you isn't a global integrator with a nine-month runway and a 40-person staffing plan. It's a concentrated group of senior architects who design the pod structure, establish the agent orchestration layer, and then hand over a system your internal team can operate — or operate it alongside you. The margin in modern software delivery isn't in the code. It's in the architecture that decides what the agents build.

The direct cost per engineer is higher in a pod — you're paying senior rates across the board. The total cost is lower when you account for coordination overhead, rework from misaligned handoffs, and the extended timelines that large teams carry structurally. A five-person pod iterating eight times per quarter typically delivers more usable output than a twenty-person team completing two release cycles in the same window, at a fraction of the management surface area.

Quality control is the core design challenge of the pod model. AI-generated code is faster to produce but introduces subtle logic errors and architectural drift that junior reviewers miss. Pods address this through systematic review gates calibrated by risk profile: automated gates for low-stakes components, and mandatory human-in-the-loop review for payment flows, auth systems, and anything touching external APIs. The Faros AI telemetry finding that AI-augmented code is getting 'bigger and buggier' is a direct warning: review protocols must be designed in, not bolted on.
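A risk-calibrated gate can be expressed as a small routing rule. The sketch below is one possible shape, assuming components carry descriptive tags; the tag names (`payments`, `auth`, `external_api`), the `Gate` enum, and the `review_gate` function are hypothetical and would need to match however a team actually labels its code.

```python
from enum import Enum
from typing import Iterable


class Gate(Enum):
    AUTOMATED = "automated"
    HUMAN_IN_LOOP = "human_in_loop"


# Assumed tag names for the high-risk areas named in the text.
HIGH_RISK_TAGS = frozenset({"payments", "auth", "external_api"})


def review_gate(component_tags: Iterable[str]) -> Gate:
    """Route a change to human review if it touches any high-risk area."""
    if set(component_tags) & HIGH_RISK_TAGS:
        return Gate.HUMAN_IN_LOOP
    return Gate.AUTOMATED


if __name__ == "__main__":
    changes = {
        "update README": ["docs"],
        "retry logic for card charges": ["payments", "backend"],
        "new partner webhook": ["external_api"],
    }
    for title, tags in changes.items():
        print(f"{title}: {review_gate(tags).value}")
```

The design choice here is that the gate defaults to automated review and escalates on a match, so the high-risk tag list is the piece that needs deliberate ownership.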

Three people is a risk profile, not a capability limit. A three-person pod of senior engineers with well-structured agent tooling can handle significant complexity — but has no redundancy. One departure or one engineer on leave changes the risk equation materially. For mid-market operators with continuous delivery requirements, four to five is the practical floor: enough domain coverage to handle architecture, platform, product surface, and security/reliability without single points of failure.