Architectural PoC: A Better On-Ramp Than Discovery

The Problem Isn't the PoC. It's What the PoC Is Proving.

Most AI proof-of-concepts fail for a reason that has nothing to do with the model, the data pipeline, or the team's capabilities. They fail because they were designed to prove the wrong thing. A typical PoC answers: can we build something impressive in a sandbox? An architectural PoC answers: can this work at production fidelity, inside our real constraints, with a governed path forward? Those are not the same question, and the gap between them is where much of the $252.3 billion spent on AI in 2024 quietly dissolved.

Gartner put a number on the wreckage in July 2024: at least 30% of generative AI projects will be abandoned after proof of concept by end of 2025. S&P Global's survey of 1,000+ enterprises found the average organization scrapped 46% of AI PoCs before they ever reached production. RAND puts the overall AI project failure rate above 80% — twice the failure rate of non-AI technology projects. These aren't flukes. They're the predictable output of a structurally broken engagement model.

The conventional discovery-then-PoC sequence — a few calls to align on requirements, a prototype to "validate the concept," and then a proposal for the real build — is optimized for the vendor's sales funnel, not the operator's architecture. What mid-market operators need isn't another slide deck showing GPT-4o summarizing their documents. They need to know whether their data estate can support retrieval-augmented generation at scale, whether their latency envelope accommodates agentic orchestration, and whether their security posture can survive the expanded attack surface that comes with tool-calling agents. None of that gets answered in a discovery call.

Why Discovery Calls Are Architecturally Useless

Discovery calls are good at surfacing business pain. They are poor at surfacing architectural risk — and architectural risk is where AI projects die. The conversations sound productive: stakeholders describe the process they want to automate, vendors nod and take notes, someone draws a box-and-arrow diagram with "LLM" in the middle. Then the PoC begins in a clean sandbox, with sample data, no auth layer, no rate limiting, no PII handling, and no integration with the six upstream systems that actually own the data.

This is what S&P Global calls "pilot paralysis": launching PoCs in sandboxes without a clear path to production. The sandbox prototype looks compelling in a demo. It falls apart the moment someone asks: how does this handle a 90-day data retention policy? What happens when a single document exceeds the embedding model's input token limit? Who owns the audit log when the agent takes a destructive action?
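One way to make the audit-log question concrete is to route every agent tool call through a wrapper that records the attempt before anything executes. The following is a minimal sketch, not a production pattern: `AuditedToolRunner`, `DESTRUCTIVE_TOOLS`, and the tool names are hypothetical, and the in-memory list stands in for whatever durable, append-only store a real system would use.

```python
import json
import time
from dataclasses import dataclass, field
from typing import Any, Callable

# Hypothetical tool names treated as destructive in this sketch.
DESTRUCTIVE_TOOLS = {"delete_record", "send_email"}


@dataclass
class AuditedToolRunner:
    tools: dict[str, Callable[..., Any]]
    audit_log: list[str] = field(default_factory=list)

    def call(self, actor: str, tool: str, approved: bool = False, **kwargs: Any) -> Any:
        # Unknown tools are denied; destructive tools need explicit approval.
        allowed = tool in self.tools and (tool not in DESTRUCTIVE_TOOLS or approved)
        # Log every attempt, including denied ones, before executing anything.
        self.audit_log.append(json.dumps({
            "ts": time.time(),
            "actor": actor,
            "tool": tool,
            "args": kwargs,
            "allowed": allowed,
        }, default=str))
        if not allowed:
            raise PermissionError(f"{tool} denied for {actor}")
        return self.tools[tool](**kwargs)


runner = AuditedToolRunner(tools={
    "lookup": lambda key: key.upper(),
    "delete_record": lambda record_id: f"deleted {record_id}",
})
print(runner.call("agent-1", "lookup", key="abc"))
```

The design choice worth noting is that the log entry is written before the permission check raises, so denied attempts leave a trace too. Who owns and reviews that log is still an organizational decision the PoC has to force.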

Forrester's April 2025 analysis of AI-augmented enterprise architecture makes the point sharply: architecture review boards, meant to ensure alignment, are increasingly seen as bureaucratic bottlenecks because they engage too late — after build decisions are already made. The architectural PoC moves those governance questions to the front. Not as a compliance checkbox, but as a design input.

46%

The average organization scrapped 46% of AI proof-of-concepts before they ever reached production, according to S&P Global's 2025 survey of 1,000+ enterprises.

What an Architectural PoC Actually Looks Like

The distinction is not cosmetic. An architectural PoC is scoped, time-boxed, and production-intentional from the first commit. Every decision made during it is a decision that would need to be made anyway — it's just made earlier, more cheaply, and with less organizational inertia to undo.

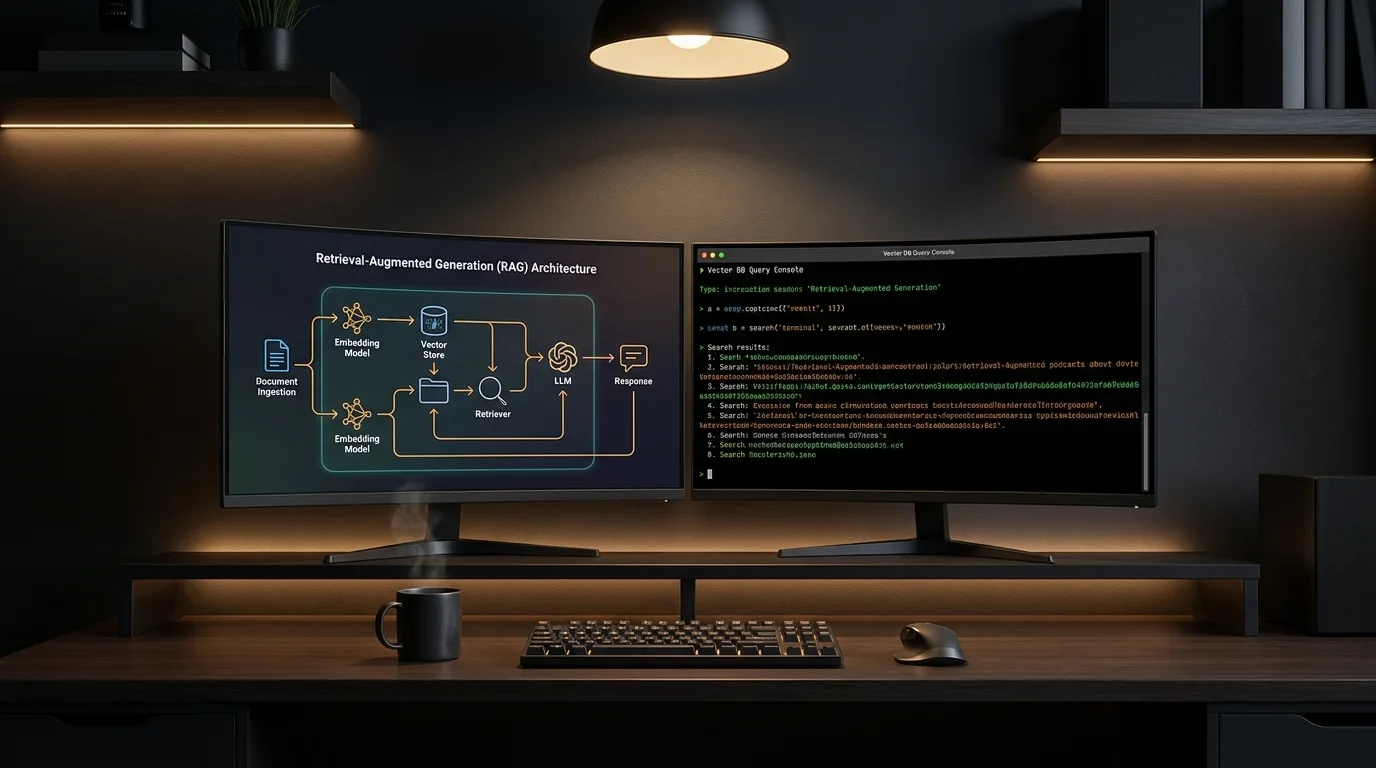

Keyhole Software's engagement with a travel media company is a clean example. Rather than building a capabilities demo, they framed the engagement explicitly as a "production-quality proof of concept" using a RAG architecture. The primary deliverable wasn't a polished interface — it was a scalable, governed architectural pattern: chunking strategy, embedding model selection, retrieval pipeline design, hallucination controls, and a data governance layer that accounted for PII from day one. The demo was almost incidental. The architecture was the product.

This is the operational difference. In a standard PoC, the architecture is deferred. In an architectural PoC, the architecture is the PoC. You're not proving that AI can summarize documents. You're proving that this retrieval pipeline, with this embedding model, against this data estate, behind this auth layer, under these latency constraints, can support the use case. That's what makes the output transferable to production instead of throwaway.
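Chunking strategy is one of the decisions named above, and it is cheap to prototype against real documents. Here is a minimal sketch of fixed-size chunking with overlap, assuming whitespace-separated words as a rough stand-in for tokens (a real pipeline would count tokens with the chosen embedding model's tokenizer). The function `chunk_words` and its default sizes are illustrative and are not taken from the Keyhole engagement.

```python
def chunk_words(text: str, max_words: int = 200, overlap: int = 40) -> list[str]:
    """Split text into word windows of at most max_words, sharing `overlap` words."""
    if max_words <= 0 or not 0 <= overlap < max_words:
        raise ValueError("need max_words > 0 and 0 <= overlap < max_words")
    words = text.split()
    step = max_words - overlap  # how far each window advances
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + max_words]))
        if start + max_words >= len(words):
            break  # the last window already reached the end of the text
    return chunks


sample = " ".join(f"w{i}" for i in range(10))
for chunk in chunk_words(sample, max_words=4, overlap=1):
    print(chunk)
```

Running something like this over the longest real documents in the data estate shows early whether any single chunk still exceeds the embedding model's input limit, and how much retrieval quality depends on the overlap setting.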

The Four Dimensions That Must Be Validated Simultaneously

AWS Prescriptive Guidance on generative AI lifecycle excellence makes an important structural point: a PoC must validate business value, data readiness, technical feasibility, and risk mitigation simultaneously — not sequentially. Sequential validation is where projects stall. You prove business value in week two, discover data readiness problems in week six, and then spend months arguing about whether the project is salvageable.

- Business value: Every technical metric must map to a business outcome via a defined measurement framework. AWS recommends the OGSM model (Objectives, Goals, Strategies, Measures) precisely because tracking technical metrics disconnected from tangible business impact is "a common failure point in generative AI projects." If you can't define what a 200ms latency improvement is worth in dollars or hours, you haven't completed this dimension.

- Data readiness: This is where most PoCs discover their actual problem. Is the source data structured enough for reliable retrieval? Is it current enough to avoid stale answers? Does ingesting it into a vector store create any data sovereignty or contractual issues? These questions surface immediately in an architectural PoC because the pipeline is real, not mocked.

- Technical feasibility: Not "can an LLM do this" — that question was answered in 2023. The real questions: What is the acceptable hallucination rate for this use case, and how do you measure against it? What does failure look like, and does the system fail gracefully? Can the orchestration layer handle the concurrency profile of actual production traffic?

- Risk mitigation: HSO's 2026 analysis puts it directly: "the most expensive mistake in AI is building something impressive that can't be repeated, audited, or hardened for production." Agentic AI systems in particular expand the attack surface in ways that sandboxed PoCs never expose — tool-calling agents that can write to databases, send emails, or trigger external APIs need security patterns baked in from the first design decision, not retrofitted after the demo impresses a steering committee.
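For the business-value dimension, the conversion from a latency number to hours or dollars can be written down explicitly. The sketch below assumes, purely for illustration, that a person waits on every request and that waiting time is valued at a loaded hourly cost. The function names, traffic volume, and cost figure are hypothetical.

```python
def annual_hours_saved(latency_saved_ms: float, requests_per_day: int,
                       working_days: int = 250) -> float:
    """Hours of waiting removed per year if a person waits on every request."""
    seconds_per_year = (latency_saved_ms / 1000) * requests_per_day * working_days
    return seconds_per_year / 3600


def annual_value(hours_saved: float, loaded_hourly_cost: float) -> float:
    """Dollar value of the saved hours at an assumed loaded hourly cost."""
    return hours_saved * loaded_hourly_cost


# Assumed inputs: 200 ms saved, 20,000 requests per day, $60 per hour.
hours = annual_hours_saved(latency_saved_ms=200, requests_per_day=20_000)
print(round(hours, 1))                      # 277.8
print(round(annual_value(hours, 60.0), 2))  # 16666.67
```

The arithmetic is trivial on purpose. The useful part is that it forces the assumptions (who is waiting, how often, at what cost) into the open, where the business owner can challenge them.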

The Contractual Logic: PoC as Mutual Qualification

There's a second dimension to the architectural PoC that most operators underestimate: it qualifies the engagement relationship, not just the technology. GTMnow's practitioner analysis of PoC frameworks argues that codifying success criteria into a binding framework, in which the buyer proceeds if the builder delivers against defined criteria, transforms a PoC from a speculative exercise into a contractual milestone. The Acme Inc. case study they document showed that moving from unstructured PoC management to playbook-driven evaluation materially lifted conversion from technical win to business win.

For mid-market operators, this cuts both ways. You learn whether the team building the PoC can actually operate at production fidelity under real constraints. And the team building it learns whether your data estate, your security requirements, and your internal decision-making process are compatible with a fast production path. A discovery call can't surface either of those things. Two to four weeks of architectural work will.

This is the honest commercial logic behind the architectural PoC as an on-ramp: it compresses months of misalignment risk into a contained, scoped engagement with defined exit criteria. If the architecture holds, you have a production blueprint and a qualified team. If it doesn't, you've spent a few weeks instead of nine months finding out.

88%

88% of AI proof-of-concepts never reach wide-scale deployment — a structural failure driven by sandbox-first, production-never PoC design.

What Separates PoC Purgatory from Production Velocity

The 88% of AI PoCs that never reach wide-scale deployment — a figure cited across multiple 2025 CIO research compilations — don't fail because the technology doesn't work. They fail because the conditions for production were never established during the PoC phase. Specifically:

- No defined accuracy threshold. What hallucination rate is acceptable for a customer-facing RAG system? 2%? 0.1%? If you didn't define it before building, you'll argue about it forever after.

- No operational handoff plan. Who owns the embedding pipeline in production? Who monitors drift when the underlying model is updated? Consulting engagements that produce PoCs without transferring operational capability are among the key failure drivers identified in the 2026 Talyx aggregation of BCG, RAND, and Stanford HAI data.

- No governance layer. Data ownership, PII handling, audit logging, and access controls are not post-launch concerns. In regulated industries, they are pre-build constraints that shape every architecture decision from the schema design outward.

- No latency budget. An LLM response that takes 4.2 seconds is unacceptable in a synchronous customer-facing flow and perfectly acceptable in an async document processing pipeline. If the PoC didn't model real traffic patterns, you don't know which one you built.
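The latency-budget point can be checked mechanically once the PoC records response times under realistic traffic. What follows is a minimal sketch, assuming a nearest-rank percentile and hypothetical budgets. The 30-second async budget and the flow names are invented for illustration, while 800 ms is this article's own example for synchronous flows.

```python
import math

# Hypothetical latency budgets in milliseconds, keyed by flow type.
BUDGETS_MS = {"sync_customer_facing": 800.0, "async_batch": 30_000.0}


def percentile(samples: list[float], pct: float) -> float:
    """Nearest-rank percentile for pct in (0, 100]."""
    if not samples:
        raise ValueError("no samples")
    if not 0 < pct <= 100:
        raise ValueError("pct must be in (0, 100]")
    ordered = sorted(samples)
    rank = math.ceil(pct / 100 * len(ordered))
    return ordered[rank - 1]


def flows_supported(latencies_ms: list[float], pct: float = 95) -> dict[str, bool]:
    """Report which flow types the observed tail latency fits within."""
    observed = percentile(latencies_ms, pct)
    return {flow: observed <= budget for flow, budget in BUDGETS_MS.items()}


# Nine fast responses and one 4.2-second outlier, as in the bullet above.
print(flows_supported([300.0] * 9 + [4200.0]))
```

With these assumed budgets, the same measurements pass the async check and fail the synchronous one, which is the point of the bullet: the budget, not the raw number, decides whether the system is acceptable.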

Gartner's 2024 data shows only 48% of AI projects make it into production, with an average of 8 months from prototype to production deployment. An architectural PoC won't eliminate that runway entirely — production hardening, load testing, and organizational change management take real time. But it can cut the rework cycle dramatically by ensuring that what enters the production track is already architecturally sound, not a sandboxed prototype that needs to be rebuilt from scratch once the real constraints are applied.

The Team Structure That Makes This Work

An architectural PoC requires a different team composition than a typical discovery engagement. You need senior architects who can make real design decisions — not analysts who document requirements and hand them to a delivery team later. The handoff chain is itself a failure mode: every translation between the person who understands the constraints and the person making the code decisions introduces noise and deferred risk.

A concentrated team of two to four senior engineers with end-to-end ownership — from data pipeline design to security posture to deployment architecture — will consistently outperform a larger team organized around specialization and handoffs. The architectural PoC is where this team composition proves its value most visibly: decisions that would take weeks to route through a traditional engagement model get made in an afternoon by people with the context to make them correctly.

This is the kind of team that would run an architectural PoC for your RAG-based internal knowledge system, your agentic workflow automation, or your LLM-augmented data pipeline — and hand you a production blueprint at the end of it, not a slide deck asking for budget to start the real work.

A Diagnostic: Should Your Next Engagement Start With an Architectural PoC?

Run through this before your next AI vendor conversation:

- Data readiness unknown? If you don't know the actual quality, latency, and governance profile of the data the system will consume, a discovery call won't surface it. An architectural PoC with a real ingestion pipeline will.

- Accuracy threshold undefined? If you can't state the acceptable error rate for the use case, you're not ready for a build engagement. An architectural PoC can help you discover what threshold is achievable and what it costs to hit it.

- Security and compliance requirements real? If your org operates under HIPAA, SOC 2, or any data residency constraint, those requirements must be designed in from the first commit. An architectural PoC is where you find out whether a candidate architecture can satisfy them — not after a six-month build.

- Prior PoC already abandoned? If a previous effort stalled in purgatory, the answer is not another discovery call. It's an architectural review of what was built, why it didn't cross to production, and a scoped re-engagement with explicit production criteria from day one.

- Team you're evaluating can't show production examples? Architectural PoC work leaves artifacts — architecture decision records, infrastructure-as-code, schema designs, security model documentation. If the team you're talking to can only show demos, that's a signal.

The abandonment rate Gartner projects, at least 30%, isn't random. It's the predictable outcome of PoCs that were never designed to survive contact with production. The architectural PoC is not a more expensive discovery call. It's a cheaper way to find out whether a six-figure build is worth starting, and what it needs to look like when it is.

Frequently Asked Questions

How long should an architectural proof of concept take for a mid-market AI project?

Two to four weeks is the right envelope for most mid-market architectural PoCs. Any shorter and you're not exposing real constraints — you're building another sandbox demo. Any longer and you're doing the production build, just without calling it that. The goal is a scoped, time-boxed engagement that produces an architecture decision record, a working pipeline against real (or representative) data, and defined success thresholds — not a polished UI.

What's the difference between an architectural PoC and a traditional pilot?

A pilot tests user adoption and operational fit after the architecture is already committed. An architectural PoC tests the architecture itself — data readiness, latency envelope, security posture, integration points, and governance model — before significant capital is spent. Pilots are expensive to abandon. Architectural PoCs are designed to be abandoned cheaply if the architecture doesn't hold, which is exactly why they're more valuable as an on-ramp.

How do we define success criteria for an architectural proof of concept?

Start with three dimensions: a measurable accuracy or reliability threshold (e.g., hallucination rate below 1.5% on domain-specific queries), a latency budget tied to the actual UX or workflow context (e.g., p95 response under 800ms for synchronous flows), and a governance checklist that reflects your real compliance environment — PII handling, audit logging, data residency. If you can't define these before the engagement starts, the first week of the PoC should be spent defining them with the architecture team.
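The three dimensions above can be captured as an explicit go/no-go check agreed before the engagement starts. This is a sketch under stated assumptions: the thresholds mirror the examples in the answer above, while `SuccessCriteria`, `evaluate`, and the checklist item names are hypothetical. How an answer gets labeled as hallucinated (human review, automated grading) is out of scope here.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SuccessCriteria:
    max_hallucination_rate: float = 0.015  # 1.5% on domain-specific queries
    max_p95_latency_ms: float = 800.0      # synchronous-flow budget
    governance_items: tuple[str, ...] = (
        "pii_handling", "audit_logging", "data_residency",
    )


def evaluate(criteria: SuccessCriteria, graded_answers: list[bool],
             p95_latency_ms: float, governance_done: set[str]) -> dict[str, bool]:
    """graded_answers holds one flag per evaluated answer; True means hallucinated."""
    if not graded_answers:
        raise ValueError("need at least one graded answer")
    rate = sum(graded_answers) / len(graded_answers)
    results = {
        "accuracy": rate <= criteria.max_hallucination_rate,
        "latency": p95_latency_ms <= criteria.max_p95_latency_ms,
        # Every required governance item must be marked complete.
        "governance": set(criteria.governance_items) <= governance_done,
    }
    results["go"] = all(results.values())
    return results


graded = [True] * 2 + [False] * 198  # 2 hallucinations in 200 graded answers
print(evaluate(SuccessCriteria(), graded, p95_latency_ms=640.0,
               governance_done={"pii_handling", "audit_logging"}))
```

In this example run the accuracy and latency checks pass but the overall result is a no-go, because the data residency item is still open. Keeping the criteria in a frozen structure makes it harder to quietly relax a threshold after the demo.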

Sources

- Gartner: 30% of Generative AI Projects Will Be Abandoned After PoC by End of 2025

- Why Most Enterprise AI Projects Fail — and the Patterns That Actually Work

- AI Proof of Concept (PoC): Guide for Businesses

- Architecting a Successful Generative AI Proof of Concept — AWS Prescriptive Guidance

- Enterprise Generative AI Proof of Concept Using RAG Architecture — Keyhole Software

- The Augmented Architect: Real-Time Enterprise Architecture in the Age of AI — Forrester

- Sales Proof of Concept (POC): 5 Ways to Drive More Revenue — GTMnow

- Why 90% of Enterprise AI Implementations Fail (2026) — Talyx